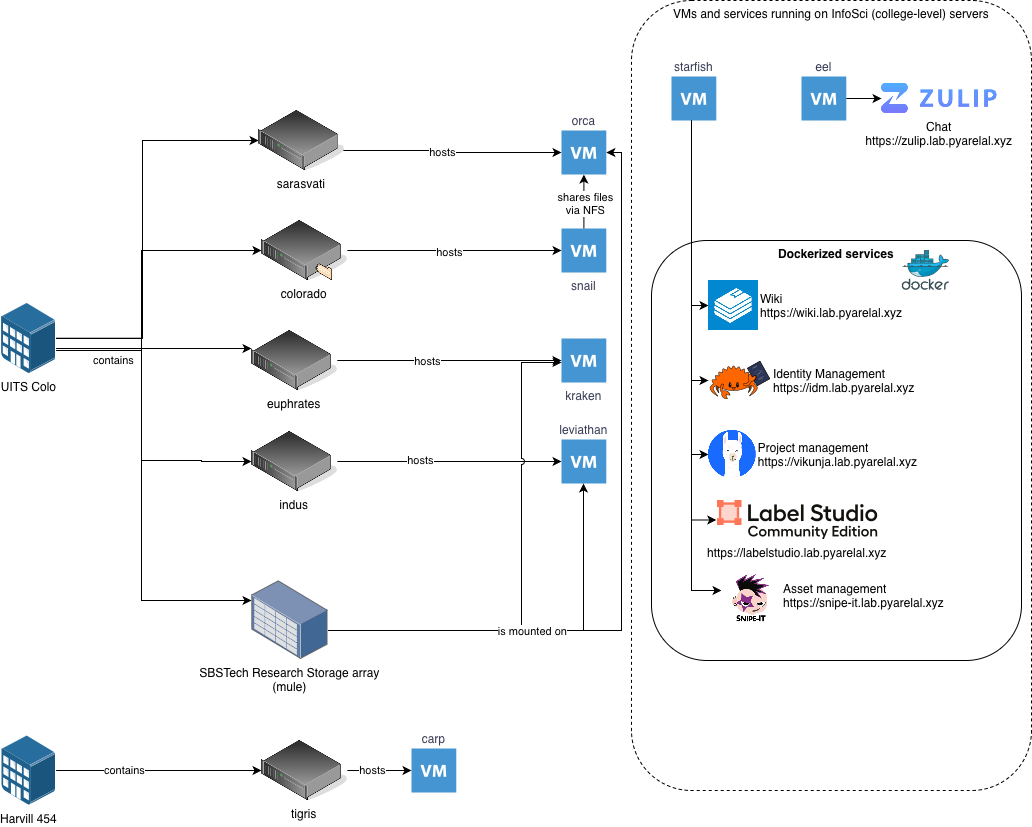

| VM Name | CPU | RAM | GPUs |
|---|---|---|---|
| kraken | AMD EPYC 7662 64-Core Processor (2.0 GHz) | 720 GB | 2x NVIDIA A100 (40 GB) |
| leviathan | AMD EPYC 7763 64-Core Processor (2.45 GHz) | 720 GB | 6x NVIDIA RTX A6000 |
| carp | AMD EPYC-Rome Processor | 95 GB | 1x NVIDIA GeForce RTX 3090 |
| orca | AMD EPYC 9474F, 48-core, 3.60 GHz, 256 MB cache | 1.5 TB (tentative) | 2x NVIDIA H100 NVL (94 GB HBM3, PCIe 5.0 x16) |

| Host | GPU monitoring |
|---|---|
| orca | Yes (NVIDIA) |
| kraken | Yes (NVIDIA) |
| leviathan | Yes (NVIDIA) |
| starfish | No |
| eel | No |